Interesting videos, distilled.

Why building eval platforms is hard — Phil Hetzel, Braintrust

An eval platform starts as “a spreadsheet plus a for-loop,” but it quickly becomes a serious agent-quality data system. The real problem is not drawing a comparison UI. The hard part is supporting a continuous loop between offline evals and production observability while storing, searching, scoring, and analyzing enormous semi-structured agent traces. Phil’s

Top 10 NEW Open Source Claude Code Tools (May)

The video is a fast filter over the current open-source Claude Code / coding-agent ecosystem. The creator’s real argument is not “install every shiny repo.” It is: coding agents are becoming more useful when wrapped in small, purpose-built operating layers — brevity constraints, structured memory, video/frame extraction, design-system references, token/cost

Top 10 Claude Code Frontend Design Skills, Plugins, & CLIs

This video is a toolkit tour for one specific pain point: Claude Code can generate working frontend code, but its default visual taste is weak and repetitive. The creator’s useful thesis is that frontend quality improves when you stop asking the agent to invent taste from scratch and instead give it stronger design inputs: anti-pattern rules, design-system m

OpenAI Image 2 is Nuts. Here are 10 Ways to Use it.

The video argues that OpenAI / ChatGPT Images 2.0 has crossed an important threshold: it is no longer just “pretty good at pictures,” but strong enough for practical commercial workflows where text, realism, layout, product detail, and visual editing used to break image models. Nate’s main claim is not that GPT Image 2 wins every prompt. It is that, across m

LLM codegen fails and how to stop 'em — Danilo Campos, PostHog

Autonomous codegen works when you stop treating the model as a magic programmer and start treating it as a capable but context-hungry agent that needs fresh documentation, good examples, sequenced instructions, constrained tools, and feedback loops. Danilo’s strongest claim is that the PostHog Wizard succeeds not because it is mostly clever code, but because

I Gave OpenClaw $10,000 to Trade Stocks

The video is a real-money stress test of autonomous AI agents: can OpenClaw run a trading strategy with $10,000 for 30 days, monitor markets, adjust positions, and communicate progress with minimal human intervention? The honest answer from the video is: it can operate autonomously, place and manage trades, and adapt its strategy — but autonomy is not the sa

How To De-Slop A Codebase Ruined By AI (with one skill)

AI does not make code architecture irrelevant. It makes architecture debt compound faster. If agents repeatedly change a codebase without understanding its module boundaries, they create duplicated rules, weak seams, and shallow abstractions. The cure is not “use less AI”; it is to make the architecture more legible to both humans and agents through deep mod

How to Build 24/7 Claude Agents. Easy.

Claude Code routines turn Claude from a local, laptop-dependent coding assistant into a remotely triggered automation worker. You can schedule it, call it from APIs/webhooks/GitHub events, give it a repo and cloud environment, and let it run one-shot agent tasks without keeping your computer open. The video’s deeper point is that “remote agents” require diff

Hermes Agent Just 10x’d Everyone’s Claude Code

The video argues for a “personal agent on a VPS” workflow: run Hermes as the always-on orchestrator, connect it to chat surfaces like Discord, give it Claude Code as a coding worker, then wire GitHub and Vercel so plain-English messages can become deployed software changes. The strongest version of the idea is not “fire humans and vibe deploy everything.” It

Claude Design 2 HOUR COURSE (Beginner to Pro)

This is a long practical walkthrough of Claude Design as a design-production environment: use normal Claude for strategy and thinking, then use Claude Design when you need visual artifacts — design systems, pitch decks, landing pages, app prototypes, and launch videos. The recurring lesson is not “just prompt harder.” It is: prepare context outside the expen

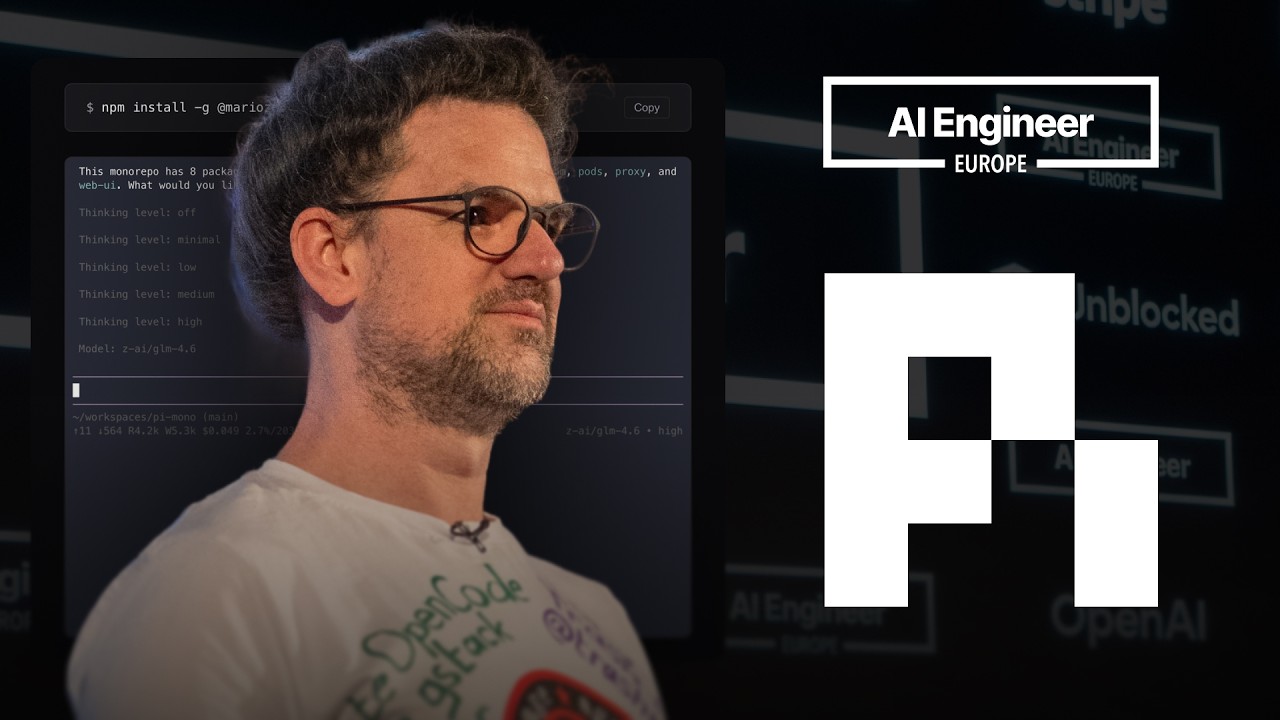

Building pi in a World of Slop — Mario Zechner

Mario argues that current AI coding culture is drowning in “slop”: too much generated code, too little understanding, too many brittle abstractions, and agent tools that hide or mutate context. His answer is pi: a minimal, malleable coding-agent harness where the user and agent control the workflow instead of being boxed into Claude Code/OpenCode-style assum

ANOTHER Open Source Repo Just Cloned Claude Design

Open Design is an early but credible open-source, GUI-based alternative to Claude Design: essentially Huashu Design plus a polished interface, agent-harness flexibility, built-in design systems, and media-provider hooks. It is not as mature or fast as Claude Design yet, but it already covers enough of the prototype/deck workflow to matter — especially for us

Andrej Karpathy: From Vibe Coding to Agentic Engineering

Karpathy’s central claim is that AI coding has crossed from “helpful autocomplete” into a new engineering substrate: LLMs are becoming a programmable computer for broad information work, not just faster code generation. The practical shift is from writing every instruction yourself to designing context, specifications, feedback loops, and agent-native enviro

“Software Fundamentals Matter More Than Ever” — Matt Pocock

Matt Pocock argues that AI coding does not make software fundamentals obsolete. It makes them more valuable. If AI can generate code faster, then bad architecture, unclear requirements, weak feedback loops, and ambiguous language become more expensive because they let the agent create chaos at machine speed. His practical message is:

> Code is not cheap. Bad